Context Compression

AstrBot has supported automatic context compression since v4.11.0.

When the conversation context reaches 82% of the maximum context window of the conversation model in use, AstrBot compresses it automatically. The goal is to retain as much of the conversation as possible without losing key information.
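The trigger condition can be illustrated with a minimal sketch. The function name `should_compress`, its parameters, and the assumption that context length is measured in tokens are illustrative only and are not taken from AstrBot's source code; only the 82% ratio comes from the text above.

```python
# Hypothetical sketch of the trigger condition; names are illustrative.
COMPRESSION_THRESHOLD = 0.82  # ratio stated in the documentation


def should_compress(
    context_tokens: int,
    max_context_tokens: int,
    threshold: float = COMPRESSION_THRESHOLD,
) -> bool:
    """Return True once the context fills `threshold` of the model's window."""
    if max_context_tokens <= 0:
        # Unknown window size: there is nothing to compare against.
        return False
    return context_tokens >= threshold * max_context_tokens


if __name__ == "__main__":
    # With a 128,000-token window the trigger point is about 104,960 tokens.
    print(should_compress(100_000, 128_000))
    print(should_compress(110_000, 128_000))
```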

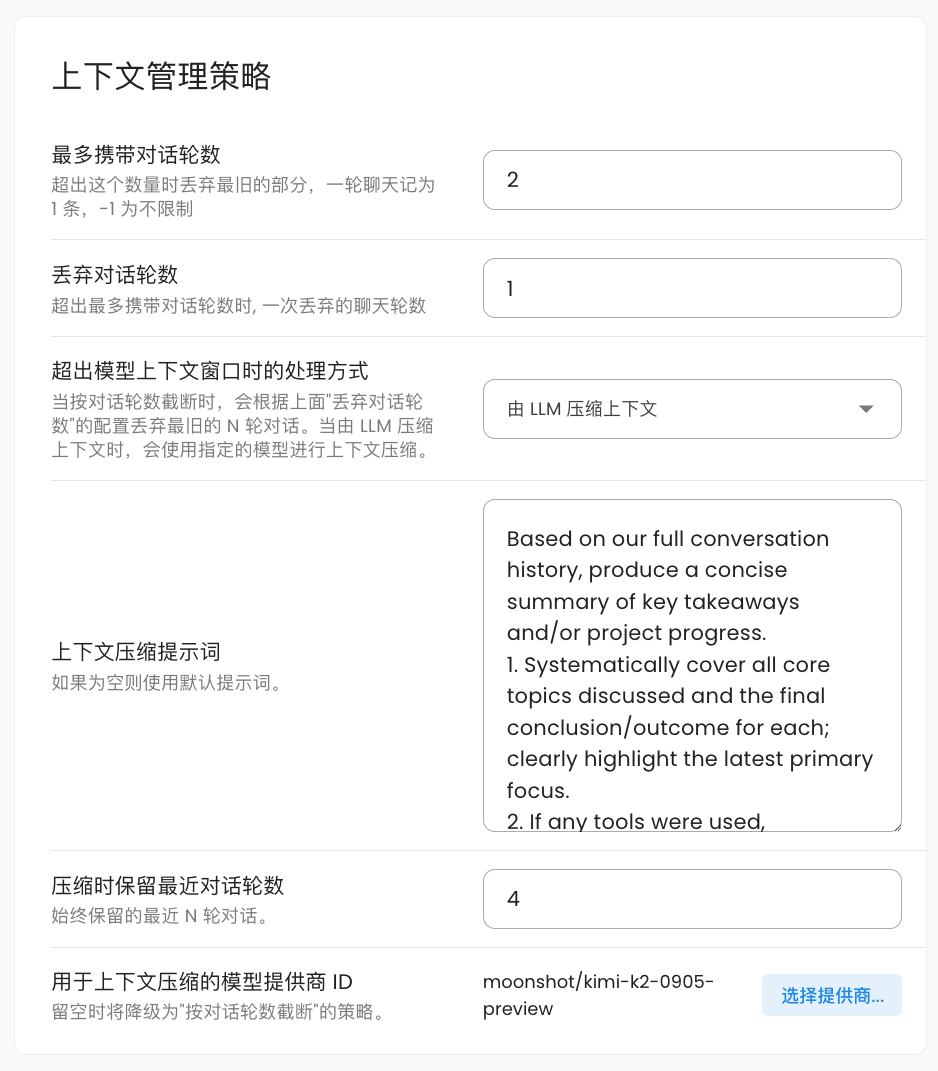

There are currently two compression strategies:

- Truncate by conversation rounds. This strategy simply removes the earliest conversation content until the context length meets the requirements. You can specify the number of conversation rounds to discard at once, with a default of 1 round. This is the default strategy.

- LLM-based context compression. This strategy calls the model itself to summarize and compress the conversation content, thereby retaining more key information. You can specify the conversation model to use for compression; if not selected, it will automatically fall back to the "truncate by conversation rounds" strategy. You can set the number of recent conversation rounds to retain during compression, with a default of 4. You can also customize the prompt used during compression. The default prompt is:

Based on our full conversation history, produce a concise summary of key takeaways and/or project progress.

1. Systematically cover all core topics discussed and the final conclusion/outcome for each; clearly highlight the latest primary focus.

2. If any tools were used, summarize tool usage (total call count) and extract the most valuable insights from tool outputs.

3. If there was an initial user goal, state it first and describe the current progress/status.

4. Write the summary in the user's language.
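The two strategies can be sketched as follows. This is a simplified illustration, not AstrBot's implementation: the message format (dicts with `role` and `content`), the definition of a round as one user message plus the replies that follow it, the helper names, and the choice to insert the summary as a `system` message are all assumptions. The `summarize` callable stands in for the call to the compression model.

```python
from typing import Callable, Optional

Message = dict[str, str]


def split_rounds(messages: list[Message]) -> list[list[Message]]:
    """Group messages into rounds; a round starts at each user message (assumed)."""
    rounds: list[list[Message]] = []
    for msg in messages:
        if msg["role"] == "user" or not rounds:
            rounds.append([msg])
        else:
            rounds[-1].append(msg)
    return rounds


def truncate_by_rounds(messages: list[Message], discard_rounds: int = 1) -> list[Message]:
    """Drop the earliest `discard_rounds` rounds (default 1, as in the text)."""
    kept = split_rounds(messages)[discard_rounds:]
    return [msg for rnd in kept for msg in rnd]


def compress_with_llm(
    messages: list[Message],
    summarize: Optional[Callable[[list[Message]], str]],
    keep_recent_rounds: int = 4,
    discard_rounds: int = 1,
) -> list[Message]:
    """Summarize older rounds and keep the most recent ones verbatim.

    Falls back to truncation when no compression model is configured.
    """
    if summarize is None:
        return truncate_by_rounds(messages, discard_rounds)
    rounds = split_rounds(messages)
    split = max(len(rounds) - keep_recent_rounds, 0)
    if split == 0:
        # Nothing older than the retained rounds, so nothing to summarize.
        return list(messages)
    old = [msg for rnd in rounds[:split] for msg in rnd]
    recent = [msg for rnd in rounds[split:] for msg in rnd]
    # Assumption: the summary replaces the old rounds as a single system message.
    return [{"role": "system", "content": summarize(old)}] + recent
```

Passing `summarize=None` mirrors the documented fallback to the "truncate by conversation rounds" strategy when no compression model is selected.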

After one round of compression, AstrBot performs a secondary check to verify whether the current context length meets the requirements. If it still does not, AstrBot adopts a halving strategy, repeatedly cutting the current context in half until the requirements are met.

- AstrBot will invoke the compressor for checking before each conversation request.

- In the current version, AstrBot does not perform context compression during tool invocations. We will support this feature in the future, so stay tuned.
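The compress-then-check flow described above can be sketched as a small loop. This is only an illustration under stated assumptions: `enforce_limit`, `count_tokens`, and `compress_once` are hypothetical names, the limit is passed in by the caller, and the sketch assumes that halving keeps the more recent half of the context, which the text does not specify.

```python
from typing import Callable

Message = dict[str, str]


def enforce_limit(
    messages: list[Message],
    count_tokens: Callable[[list[Message]], int],
    limit_tokens: int,
    compress_once: Callable[[list[Message]], list[Message]],
) -> list[Message]:
    """Run one compression pass, then halve until the context fits."""
    if count_tokens(messages) <= limit_tokens:
        return messages  # already within the limit, nothing to do

    # First pass: the configured strategy (truncation or LLM summary).
    messages = compress_once(messages)

    # Secondary check: halve while the context is still too long.
    # Assumption: the older half is discarded and the newer half is kept.
    while count_tokens(messages) > limit_tokens and len(messages) > 1:
        messages = messages[len(messages) // 2:]
    return messages


if __name__ == "__main__":
    # Character count is used here as a stand-in for a real tokenizer.
    def count(msgs: list[Message]) -> int:
        return sum(len(m["content"]) for m in msgs)

    history = [{"role": "user", "content": "x" * 10} for _ in range(8)]
    result = enforce_limit(history, count, 25, lambda msgs: msgs[2:])
    print(len(result))
```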

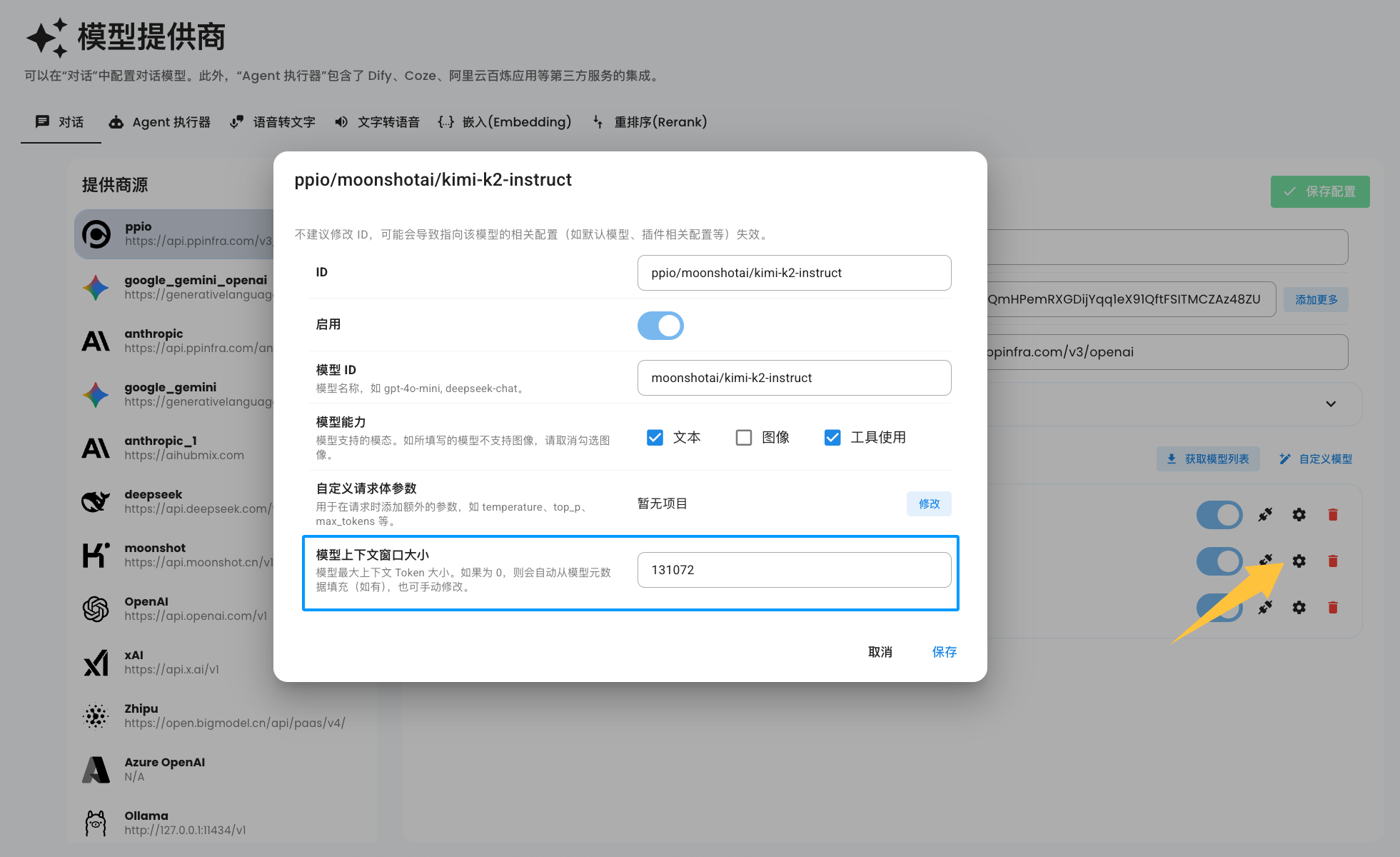

By default, when you add a model, AstrBot automatically retrieves the model's context window size from the API provided by MODELS.DEV based on the model's ID. However, due to the wide variety of models and the fact that some providers even modify the model IDs, AstrBot cannot automatically infer the context window size for all models you add.

You can manually set the model's context window size in the model configuration, as shown in the image below:

Note

If you don't see the configuration option shown in the image above, please delete the model and re-add it.

When the model context window size is set to 0, AstrBot will still automatically retrieve the model's context window size from MODELS.DEV for each request. If it remains 0, context compression will not be enabled for that request.
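The resolution order described above can be sketched as follows. The names `resolve_context_window` and `lookup_window` are hypothetical, and the dictionary is a stand-in for whatever data the MODELS.DEV lookup returns. The model IDs and sizes in it are made-up examples, not real values.

```python
from typing import Callable

# Stand-in for remotely retrieved metadata; IDs and sizes are invented.
EXAMPLE_WINDOWS: dict[str, int] = {"example-model-a": 128_000}


def lookup_window(model_id: str) -> int:
    """Hypothetical lookup: return the window size, or 0 if the ID is unknown."""
    return EXAMPLE_WINDOWS.get(model_id, 0)


def resolve_context_window(
    configured_size: int,
    model_id: str,
    lookup: Callable[[str], int] = lookup_window,
) -> int:
    """Prefer the manually configured size; otherwise fall back to the lookup."""
    if configured_size > 0:
        return configured_size
    return lookup(model_id)


def compression_enabled(configured_size: int, model_id: str) -> bool:
    """Compression is skipped for the request when the size is still 0."""
    return resolve_context_window(configured_size, model_id) > 0


if __name__ == "__main__":
    print(compression_enabled(0, "example-model-a"))    # resolved via lookup
    print(compression_enabled(0, "renamed-by-vendor"))  # unknown ID, stays 0
    print(compression_enabled(32_000, "renamed-by-vendor"))  # manual setting
```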
